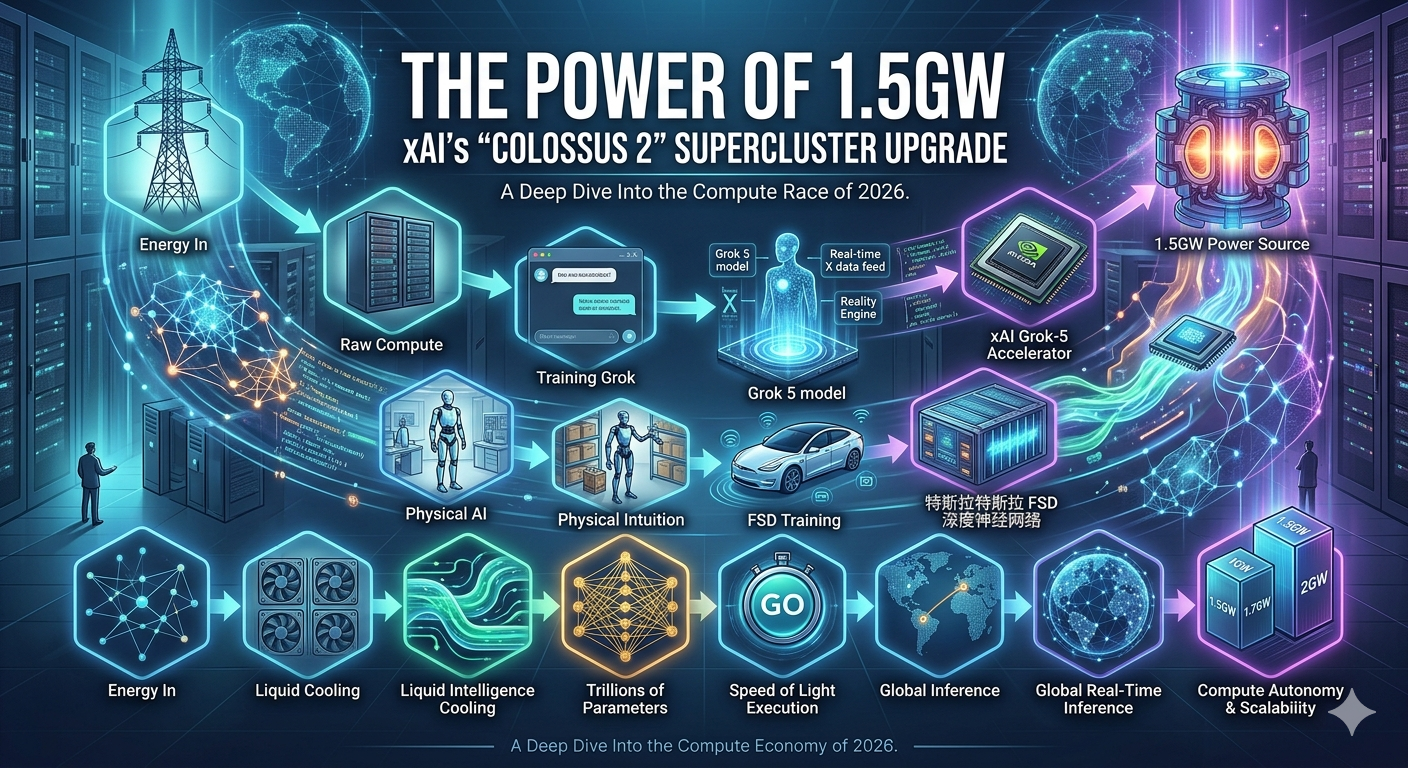

The Power of 1.5GW: xAI’s "Colossus 2" Supercluster Upgrade

In the world of AI, compute is the ultimate currency, and Elon Musk’s xAI just broke the bank. As of April 2026, the "Colossus 2" supercluster has officially reached its massive 1.5-gigawatt (GW) power milestone, solidifying its position as the most powerful AI training hub on the planet.

To put that in perspective: 1.5GW is enough electricity to power roughly 1.1 million homes, and it exceeds the peak electricity demand of the entire city of San Francisco.
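As a rough sanity check on the household comparison, the sketch below works out the average continuous draw per home that the two figures imply. It uses only the numbers quoted in this article (1.5GW and 1.1 million homes); the function names are illustrative, and the result is a back-of-envelope average, not a measured value.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours in a non-leap year


def average_kw_per_home(total_gw: float, homes: float) -> float:
    """Average continuous draw per home in kW implied by the comparison."""
    total_kw = total_gw * 1_000_000  # 1 GW = 1,000,000 kW
    return total_kw / homes


def annual_kwh_per_home(avg_kw: float) -> float:
    """Energy used in a year by a home drawing avg_kw continuously."""
    return avg_kw * HOURS_PER_YEAR


# Figures quoted in the article.
avg_kw = average_kw_per_home(total_gw=1.5, homes=1_100_000)
annual_kwh = annual_kwh_per_home(avg_kw)

print(f"{avg_kw:.2f} kW per home on average")
print(f"{annual_kwh:,.0f} kWh per home per year")
```

The comparison works out to about 1.36 kW of continuous draw per home, or just under 12,000 kWh a year, which readers can check against household consumption figures for their own region.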

Why the 1.5GW Upgrade Matters

The jump from the initial 1GW launch in January to 1.5GW this April isn't just a technical flex: it's the fuel required for Grok 5.

Training at Scale: Colossus 2 now houses over 550,000 NVIDIA GPUs (including the latest Blackwell GB200 and GB300 chips). This density allows xAI to train models with trillions of parameters at speeds that were unthinkable just 12 months ago.
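To get a feel for that density, the sketch below divides the site's quoted power by the quoted GPU count. Both inputs come from this article; the result is a facility-level budget per GPU under the assumption that the full 1.5GW is spread across 550,000 GPUs, so it also covers cooling, networking, CPUs, and storage. It is not the power rating of any individual chip.

```python
def facility_watts_per_gpu(site_gw: float, gpu_count: int) -> float:
    """Facility-level power budget per GPU, in watts.

    Assumes the whole site's power is divided evenly across the GPU count,
    so the figure includes cooling, networking, and other overhead.
    """
    site_watts = site_gw * 1_000_000_000  # 1 GW = 1e9 W
    return site_watts / gpu_count


# Figures quoted in the article.
watts_per_gpu = facility_watts_per_gpu(site_gw=1.5, gpu_count=550_000)

print(f"{watts_per_gpu:,.0f} W of site power per GPU")
```

That comes to roughly 2.7 kW of site power for every GPU. Because the article says "over 550,000" GPUs, the real per-GPU figure would be somewhat lower.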

The "Reality Engine": With this level of compute, Grok 5 is moving beyond simple text prediction. It is being trained on a "Reality Engine" that cross-references 500 million daily posts on X and hundreds of millions of hours of live video to verify "truth" in real time.

Physical Intuition: This massive cluster is also being used to bridge the gap between AI and robotics, providing the "brainpower" for Tesla’s Optimus and FSD (Full Self-Driving) by training on millions of hours of real-world physical dynamics.

Hacking the Infrastructure Timeline

While competitors are often slowed down by multi-year waits for utility grid upgrades, xAI has bypassed the bottleneck through Energy Autonomy:

On-Site Generation: The Memphis site utilizes massive on-site natural gas turbines and Tesla Megapacks for battery storage.

Rapid Expansion: By acquiring a third building (nicknamed "MACROHARDRR"), xAI is already looking toward a 2GW total capacity later this year.

The Bottom Line

As of April 2026, xAI has shown that speed of execution is its greatest competitive advantage. While other labs are still in the planning phases for gigawatt-scale data centers, Colossus 2 is already online, humming at 1.5GW, and rewriting the rules of what an AI model can achieve.