Memory Bandwidth Bottlenecks: HBM4 and the Future of AI Compute

The fastest processors on earth spend much of their time standing in line, waiting for data.

We are living in an era of unprecedented computational power. In early 2026, we marvel at GPUs that execute quadrillions of calculations per second. Yet, there is a fundamental imbalance. While computation speed has skyrocketed, the speed at which data travels from memory to that processor has lagged far behind.

This is the memory bandwidth bottleneck, often called the memory wall, and it is one of the greatest obstacles to achieving truly scalable, multi-trillion-parameter artificial intelligence.

The Tyranny of the Interconnect

The equation is simple: AI requires moving immense amounts of data. Whether you are training a reasoning model like GPT-5 or running thousands of agentic inference workflows simultaneously, the model's weights must be read from memory constantly, and in many inference workloads that means once for every generated token.
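
To make that concrete, here is a minimal back-of-the-envelope sketch. It assumes a decoder that reads every weight once per generated token and ignores caches, batching, and compute time. The function name and the example numbers are hypothetical, chosen only for illustration.

```python
def max_decode_tokens_per_second(num_params, bytes_per_param, bandwidth_bytes_per_s):
    """Bandwidth-only upper bound, assuming every weight is read once per token."""
    weight_bytes = num_params * bytes_per_param
    return bandwidth_bytes_per_s / weight_bytes


# Hypothetical example values, not measurements:
# 1 trillion parameters, 1 byte per parameter (8-bit weights),
# 8 TB/s of aggregate memory bandwidth.
bound = max_decode_tokens_per_second(1e12, 1.0, 8e12)
print(f"at most {bound:.1f} tokens/s per stream")
```

Under these assumptions the ceiling is 8 tokens per second for a single stream, no matter how many calculations per second the processor can perform. Batching many requests together raises throughput, but the per-token weight traffic stays the same.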

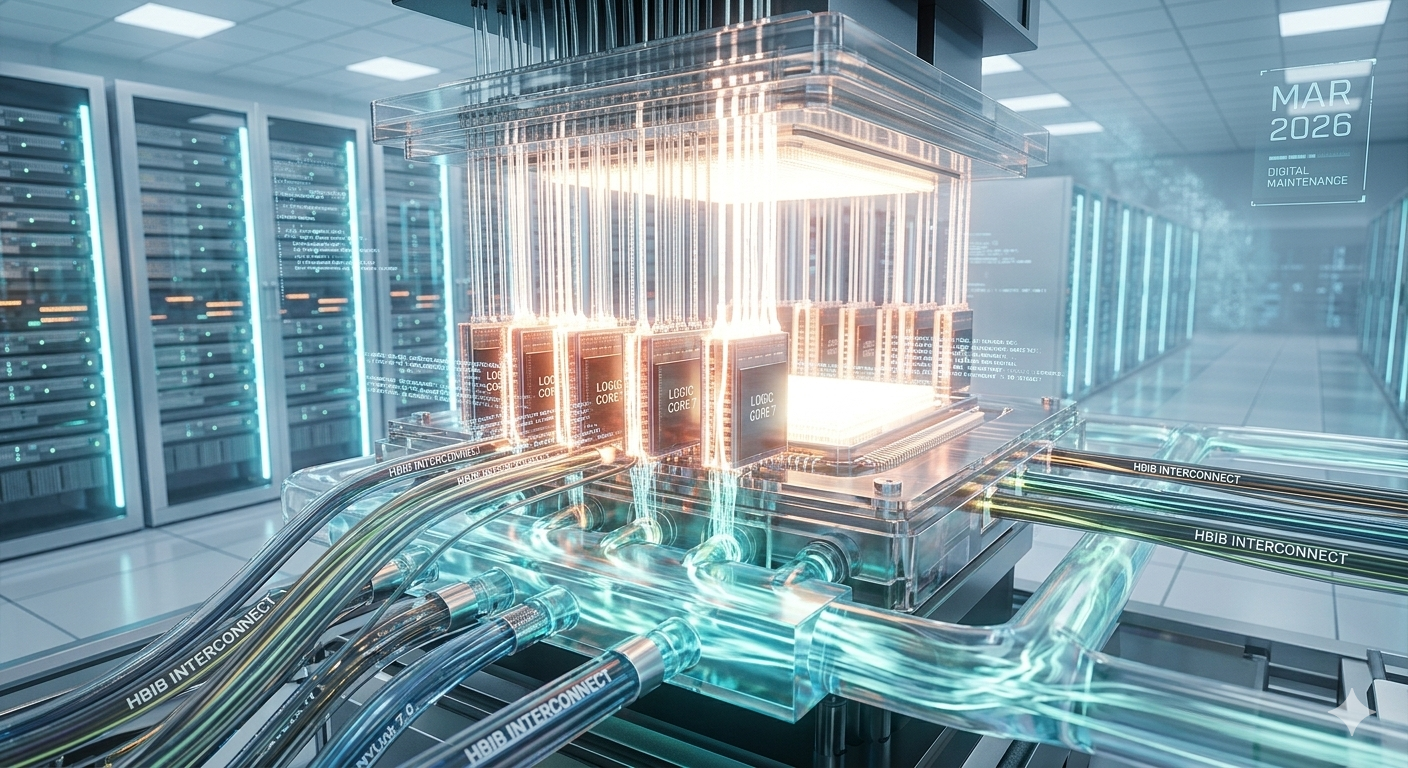

A standard HBM3E stack, which was cutting-edge only a year ago, can become a bottleneck when paired with 2026-era processors pushing towards 1,000-watt, ultra-dense configurations. The logic consumes data faster than the memory interface can supply it. This is why, when you look inside a next-generation data center rack (like the one pictured), the complexity isn't just in the compute modules, but in the dense jungle of coolant tubes that carry away the heat generated by moving and processing data at these speeds.

This bottleneck forces data centers into inefficiency. Processors stall while they wait for data, which increases latency, wastes energy, and drives up training costs.
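
One common way to reason about these stalls is the roofline model: a kernel's achievable throughput is capped either by peak compute or by memory bandwidth multiplied by its arithmetic intensity (operations per byte moved), whichever is lower. The sketch below uses hypothetical hardware figures (1 PFLOP/s peak, 4 TB/s bandwidth), not the specifications of any particular product.

```python
def attainable_flops(peak_flops, bandwidth_bytes_per_s, flops_per_byte):
    """Roofline bound: limited by compute or by memory traffic, whichever is lower."""
    return min(peak_flops, bandwidth_bytes_per_s * flops_per_byte)


def ridge_point(peak_flops, bandwidth_bytes_per_s):
    """Arithmetic intensity (FLOP/byte) at which a kernel becomes compute-bound."""
    return peak_flops / bandwidth_bytes_per_s


PEAK = 1e15        # 1 PFLOP/s, hypothetical
BANDWIDTH = 4e12   # 4 TB/s, hypothetical

for intensity in (2, 50, 250, 1000):
    achieved = attainable_flops(PEAK, BANDWIDTH, intensity)
    print(f"{intensity:>5} FLOP/byte -> {achieved / PEAK:.1%} of peak")
```

With these assumed figures, any workload below 250 FLOP per byte is memory-bound, and a kernel at 2 FLOP per byte can use less than 1% of the processor's peak. Doubling bandwidth halves that ridge point, which is why a memory upgrade can matter more than a faster chip.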

Enter HBM4: Breaking the Wall

This month, the defining topic across data center infrastructure is the arrival of High Bandwidth Memory 4 (HBM4), whose JEDEC standard was published in April 2025. This isn't just an incremental speed boost; it is a foundational change in architecture.

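HBM has always been 3D-stacked memory: the DRAM layers sit on top of a base logic die and use microscopic vertical interconnects (TSVs, or Through-Silicon Vias) to transmit signals, while the whole stack is typically placed beside the processor on a silicon interposer (2.5D integration). HBM4 keeps that arrangement but doubles the interface width from 1,024 to 2,048 bits per stack. This massive increase in connection points offers a pathway to bandwidths of roughly 2 terabytes per second per stack at the standard's 8 Gb/s per-pin rate, and faster pin speeds would push it higher. Stacking DRAM directly on top of the compute die, true 3D integration, is a further step the industry is exploring.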
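
The per-stack figure follows from simple arithmetic: interface width multiplied by the per-pin data rate. Here is a small sketch using the headline numbers from the JEDEC HBM4 standard (2,048-bit interface, up to 8 Gb/s per pin). The HBM3E pin rate of 9.6 Gb/s is an assumption for comparison, since shipping parts vary, and the function name is hypothetical.

```python
def stack_bandwidth_gb_per_s(interface_width_bits, gbit_per_s_per_pin):
    """Peak bandwidth of one stack in GB/s (decimal gigabytes)."""
    return interface_width_bits * gbit_per_s_per_pin / 8  # 8 bits per byte


hbm3e = stack_bandwidth_gb_per_s(1024, 9.6)  # 9.6 Gb/s is an assumed HBM3E pin rate
hbm4 = stack_bandwidth_gb_per_s(2048, 8.0)   # JEDEC HBM4 headline figures
print(f"HBM3E: {hbm3e:.0f} GB/s, HBM4: {hbm4:.0f} GB/s")
```

Note that the gain comes from the wider interface rather than faster pins: in this comparison the HBM4 pin rate is actually lower than the assumed HBM3E rate, yet the stack delivers considerably more bandwidth.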

Scaled across a full server rack, this architectural change is meant to let memory bandwidth keep pace with logic.

Implication: Total System Integration

The significance of HBM4 goes beyond the raw numbers. It is driving the trend toward liquid-cooled, integrated systems.

By shrinking the physical distance between memory and compute, 3D stacking reduces the energy needed to move each bit, but it drastically increases heat density. The solution is the intricate liquid cooling architecture that dominates modern infrastructure design. These systems use highly sophisticated coolant manifolds to deliver cooling directly to the point of maximum heat, where the memory meets the logic.
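
The trade-off can be sketched with one line of arithmetic: the power spent moving data is bandwidth (in bits per second) multiplied by energy per bit. The energy-per-bit values below are placeholders chosen for illustration, not vendor figures, and the function name is hypothetical.

```python
def data_movement_power_watts(bandwidth_bytes_per_s, picojoules_per_bit):
    """Power spent moving data: bits per second times joules per bit."""
    bits_per_second = bandwidth_bytes_per_s * 8
    return bits_per_second * picojoules_per_bit * 1e-12  # pJ -> J


# Hypothetical scenarios: a longer link at 4 pJ/bit and a shorter one at 2 pJ/bit.
for label, bandwidth, pj_per_bit in [
    ("1 TB/s at 4 pJ/bit", 1e12, 4.0),
    ("2 TB/s at 4 pJ/bit", 2e12, 4.0),
    ("2 TB/s at 2 pJ/bit", 2e12, 2.0),
]:
    watts = data_movement_power_watts(bandwidth, pj_per_bit)
    print(f"{label}: about {watts:.0f} W per stack")
```

Under these assumed values, halving the energy per bit only offsets a doubling of bandwidth. The total power stays roughly flat while the hardware producing it gets physically denser, which is where the cooling problem comes from.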

The future of AI compute is no longer only about the fastest chip. It is about engineering a balanced system where memory, interconnect, and cooling work in frictionless harmony. HBM4 is a key technology for making that balance possible, unlocking the next order of magnitude in AI performance.