The Rise of "Small Language Models" (SLMs): Why Smaller is Smarter for Edge Computing

We’ve all heard of the giants. Models like GPT-4 and Gemini are the "supercomputers" of the AI world—massive, powerful, and hungry for data centers the size of football fields.

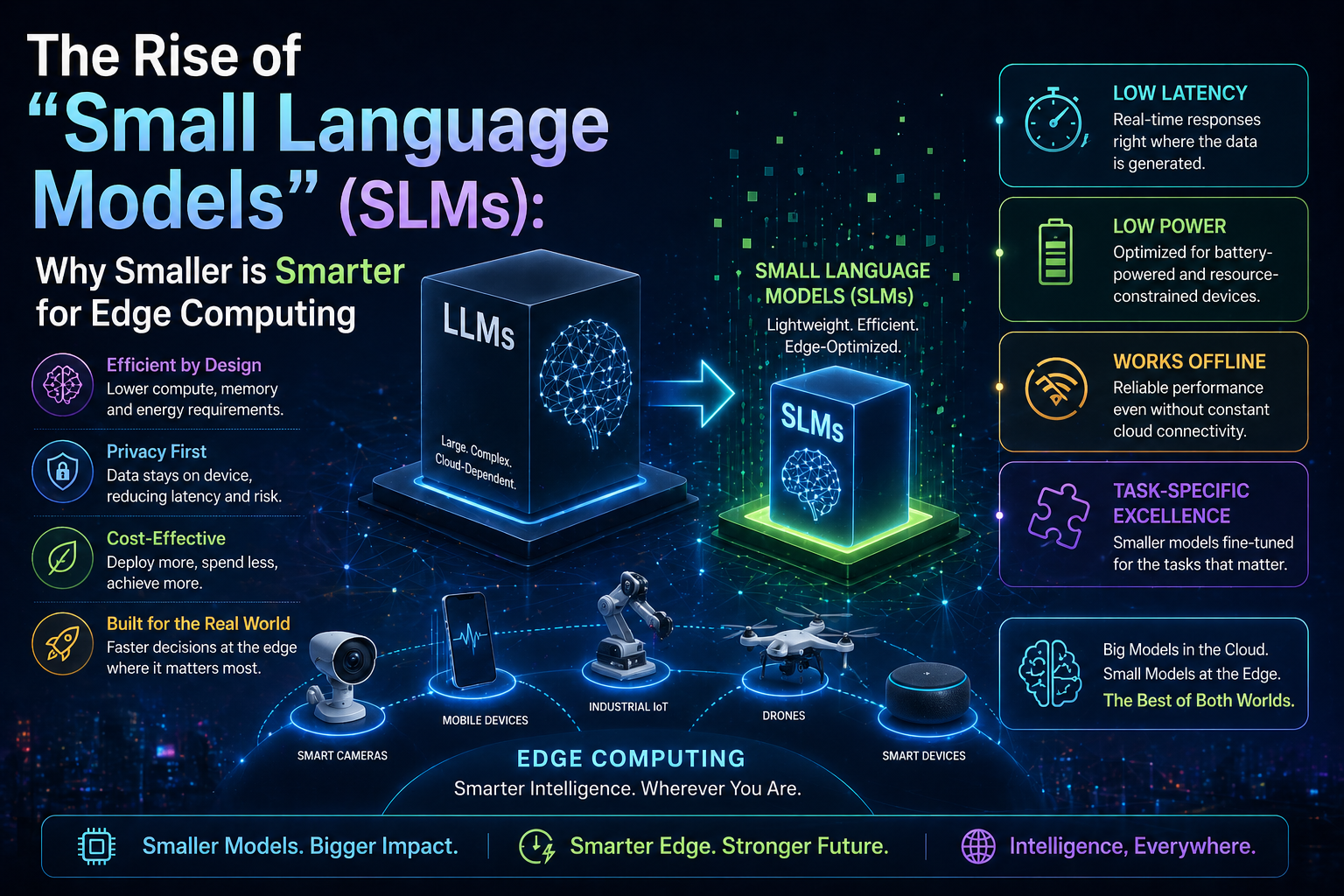

But a quiet revolution is happening. In 2026, the tech world is shrinking the "brains" of AI so they can live right in your pocket, your car, or even on a factory floor. Welcome to the era of Small Language Models (SLMs).

What are SLMs?

Think of a Large Language Model (LLM) as a massive university library. It covers almost every subject, but it’s hard to move and expensive to maintain.

An SLM, by contrast, is like a highly specialized field guide. It’s compact, fast, and designed to do a few specific things well. These models are small enough to run on edge devices (hardware like smartphones, IoT sensors, and local servers) without needing an internet connection.
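To get a feel for what "small enough" means, here is a rough back-of-the-envelope sketch. It assumes the model's weights dominate memory use and ignores everything else the model needs at runtime, so treat the result as a lower bound. The function names, the 3-billion-parameter model, the 8 GB phone, and the "use at most half the RAM" rule are all illustrative assumptions, not figures from any specific product.

```python
def weight_footprint_gb(num_params: float, bits_per_weight: int) -> float:
    """Approximate size of the model weights in gigabytes (1 GB = 1e9 bytes)."""
    total_bytes = num_params * bits_per_weight / 8
    return total_bytes / 1e9


def fits_on_device(num_params: float, bits_per_weight: int,
                   device_ram_gb: float, usable_fraction: float = 0.5) -> bool:
    """Rough check: do the weights fit in the share of RAM we allow the model?

    usable_fraction is an assumed budget; the OS and other apps need the rest.
    """
    budget_gb = device_ram_gb * usable_fraction
    return weight_footprint_gb(num_params, bits_per_weight) <= budget_gb


if __name__ == "__main__":
    # Hypothetical 3-billion-parameter model on a hypothetical 8 GB phone.
    for bits in (16, 8, 4):
        size = weight_footprint_gb(3e9, bits)
        ok = fits_on_device(3e9, bits, device_ram_gb=8)
        print(f"{bits}-bit weights: {size:.1f} GB, fits: {ok}")
```

Under these assumptions, the same model takes about 6 GB at 16 bits per weight but only about 1.5 GB at 4 bits, which is why shrinking the numeric precision of the weights matters so much for getting a model onto a phone.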

The "Big" vs. "Small" Breakdown

| | Large Language Models (LLMs) | Small Language Models (SLMs) |
| --- | --- | --- |
| Location | Massive cloud servers | Your device (the “edge”) |
| Internet | Required | Not needed (works offline) |
| Speed | May have some lag | Near-instant response |
| Cost | High (subscriptions or API fees) | Low (runs on your device) |

Why "Edge Computing" Changes Everything

Running AI at the "edge" (where the data is actually created) isn't just a technical flex. It addresses three of the biggest problems in tech today:

1. Privacy is Built-In

When an AI model lives on your phone, your data doesn't have to leave your phone. Whether you’re drafting a sensitive work email or analyzing medical data, an SLM processes it locally. With no cloud upload, there is far less opportunity for that data to leak in transit or from someone else's server.

2. Low Latency (Fewer Loading Spinners)

Have you ever talked to an AI and waited three seconds for a reply? In a self-driving car or a robotic surgery arm, three seconds is an eternity. SLMs cut out the network round trip because the "thinking" happens inches away from the action, so the only wait left is the time the device itself needs to compute the answer.
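The trade-off can be sketched with a toy latency model: total wait is the network round trip plus the time to generate the reply. This is a simplification that ignores queueing and other overheads, and the function name and every number in the example are made-up placeholders rather than measurements of any real model or network.

```python
def reply_latency_ms(reply_tokens: int, tokens_per_second: float,
                     network_round_trip_ms: float = 0.0) -> float:
    """Toy estimate of the wait for a full reply, in milliseconds.

    On-device inference has no network hop, so network_round_trip_ms is 0.
    """
    generation_ms = reply_tokens * 1000 / tokens_per_second
    return network_round_trip_ms + generation_ms


if __name__ == "__main__":
    # Placeholder figures chosen only to illustrate the arithmetic.
    short_reply = 5  # e.g. a brief command like "brake now"
    cloud = reply_latency_ms(short_reply, tokens_per_second=80,
                             network_round_trip_ms=150)
    device = reply_latency_ms(short_reply, tokens_per_second=30)
    print(f"cloud:     {cloud:.0f} ms")
    print(f"on-device: {device:.0f} ms")
```

With these placeholder numbers, the on-device model answers the short request sooner even though it generates text more slowly, because it never pays the network cost. For long replies the faster cloud hardware can win, which is one reason SLMs suit short, focused tasks.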

3. Sustainability

Running LLMs in data centers takes a large amount of electricity, and often water for cooling. SLMs need a fraction of the computing power for each request, which makes them a more sustainable choice for the next generation of electronics.

Real-World Examples: SLMs in Action

Smart Home Assistants: Your thermostat can understand complex voice commands without sending a recording of your living room to a corporate server.

Industrial Sensors: A sensor on a wind turbine can "read" vibration data and predict a failure instantly, even in the middle of the ocean without Wi-Fi.

Wearable Health Tech: A smartwatch can provide real-time coaching based on your heart rate and movement patterns while keeping your health data on your wrist.

The Bottom Line: Power to the Pocket

We are moving away from a world where AI is a "destination" you visit online. Instead, AI is becoming an invisible, helpful layer baked into the objects around us.

The future of AI isn't just about being bigger; it’s about being closer, faster, and more private. For the first time, the smartest person in the room might just be the device in your hand.

Does the idea of "offline AI" make you feel more secure about your privacy, or do you prefer the unlimited power of the cloud?