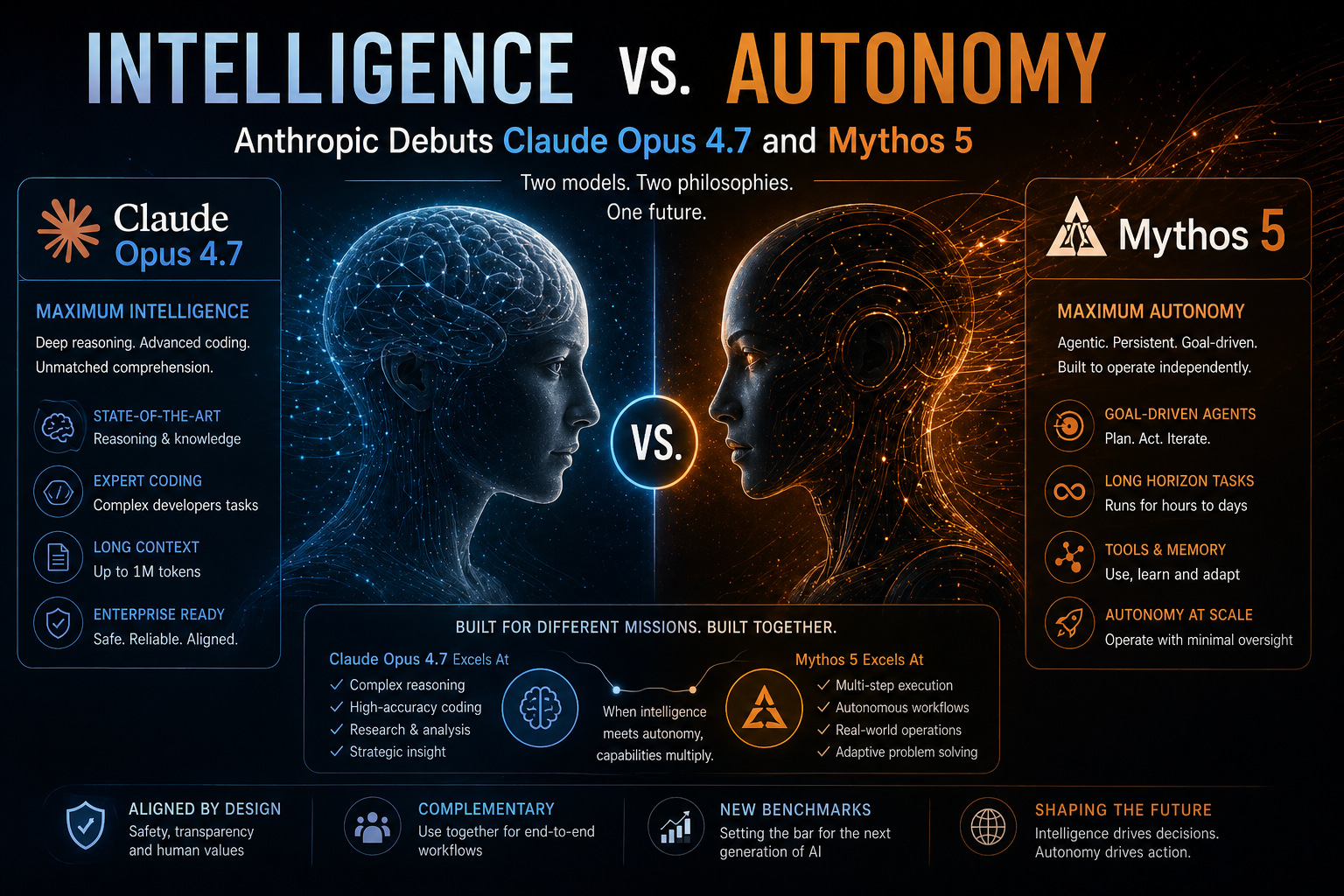

Intelligence vs. Autonomy: Anthropic Debuts Claude Opus 4.7 and Mythos 5

The AI arms race just entered a new, more nuanced chapter. While the industry has spent years chasing "raw intelligence," Anthropic’s latest release—the dual-launch of Claude Opus 4.7 and the restricted Claude Mythos 5—signals a shift in focus. It's no longer just about what the AI knows; it's about what the AI can do without you holding its hand.

Released on April 16, 2026, these models represent two sides of the same coin: one built for the masses, and one built for the future of autonomous work.

Claude Opus 4.7: The New Professional Standard

Opus 4.7 is now the flagship model available to the general public (and integrated into tools like GitHub Copilot). It isn't just a minor patch; it’s a comprehensive overhaul of how Claude interacts with the world.

Ultra-HD Vision: Opus 4.7 can now process images up to 2,576 pixels, a 3x increase from previous models. This makes it a beast at reading dense financial charts, architectural blueprints, and complex UI screenshots.

The "Literal" Model: Anthropic has sharpened instruction following to a surgical degree. If you tell Opus 4.7 to do something, it takes you literally. (Tip: You might need to re-tune your old prompts, as "loose" instructions no longer fly here.)

Agentic Coding: On SWE-bench Pro, Opus 4.7 scored a staggering 64.3%, proving it can handle multi-step software engineering tasks that would have stumped earlier versions.
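
If you want to pre-size screenshots or charts for the vision limit mentioned above, a minimal sketch might look like the following. It assumes the 2,576-pixel figure applies to the longer side of the image, which the announcement does not spell out, and `fit_within` is a hypothetical helper name, not part of any Anthropic SDK.

```python
def fit_within(width: int, height: int, max_side: int = 2576) -> tuple[int, int]:
    """Return (width, height) scaled down so the longer side is at most max_side.

    Assumption: the 2,576 px limit is measured on the longer side.
    Images already within the limit are returned unchanged.
    """
    if width <= 0 or height <= 0:
        raise ValueError("dimensions must be positive")
    longest = max(width, height)
    if longest <= max_side:
        return width, height
    # Scale both sides by max_side / longest to preserve the aspect ratio.
    new_width = max(1, round(width * max_side / longest))
    new_height = max(1, round(height * max_side / longest))
    return new_width, new_height


if __name__ == "__main__":
    print(fit_within(4000, 3000))
```

The function only computes target dimensions; the actual resize would be done with whatever image library you already use.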

Claude Mythos 5: The "Autonomous" Ghost in the Machine

While Opus 4.7 is for the public, Claude Mythos 5 is Anthropic’s more restricted, "preview" system. The difference between the two isn't necessarily intelligence—it’s autonomy.

"Opus 4.7 is like a brilliant assistant who does exactly what you say. Mythos 5 is the manager who figures out the plan, books the flights, and executes the project while you sleep."

Mythos 5 is designed for long-horizon tasks. In internal testing, it pulled ahead of Opus 4.7 by nearly 25 points in "agentic browsing" (using the web to complete complex goals). Due to its high level of autonomy, Mythos remains in a restricted "Safe Deployment" phase for enterprise partners while Anthropic tests its cybersecurity safeguards.

Quick Comparison: Which Claude is Which?

Not sure which model fits your workflow? Here is the breakdown of how the two new powerhouses compare:

Availability:

Opus 4.7: Generally Available (Public API, Amazon Bedrock, Google Vertex AI).

Mythos 5: Restricted Research Preview (Enterprise & Cybersecurity partners only).

Core Strength:

Opus 4.7: Surgical instruction following and high-resolution visual analysis.

Mythos 5: Deep unsupervised autonomy and long-horizon "agentic" tasks.

Coding Performance (SWE-bench):

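Opus 4.7: 87.6% on SWE-bench Verified, a new high mark for public models. (This is a different benchmark from SWE-bench Pro, where Opus 4.7 scored 64.3%.)

Mythos 5: 95%+ (internal testing). The first model to solve expert-level cyber tasks autonomously.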

Best Use Case:

Opus 4.7: Professional research, production-ready coding, and complex data analysis.

Mythos 5: Building fully autonomous AI agents and defensive cybersecurity.

Pricing (per 1M tokens):

Opus 4.7: $5 Input / $25 Output (Same as Opus 4.6).

Mythos 5: Custom Enterprise pricing / Tiered access.
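
To make the Opus 4.7 rates concrete, here is a rough cost estimate as a small sketch. It uses only the $5 input / $25 output per 1M tokens quoted above and ignores any other pricing factors the post does not mention; `estimate_cost_usd` is a hypothetical helper, not an official calculator.

```python
from decimal import Decimal

# Rates quoted in this post for Opus 4.7, in USD per 1M tokens.
INPUT_USD_PER_MTOK = Decimal("5")
OUTPUT_USD_PER_MTOK = Decimal("25")


def estimate_cost_usd(input_tokens: int, output_tokens: int) -> Decimal:
    """Estimate the cost of one request from its token counts."""
    if input_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    million = Decimal(1_000_000)
    total = input_tokens * INPUT_USD_PER_MTOK + output_tokens * OUTPUT_USD_PER_MTOK
    return total / million


if __name__ == "__main__":
    # 200k input tokens and 10k output tokens: $1.00 + $0.25
    print(estimate_cost_usd(200_000, 10_000))
```

Because output tokens cost five times as much as input tokens at these rates, long generated answers tend to dominate the bill faster than long prompts do.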

Key Takeaway for Developers

While Opus 4.7 is the smartest model you can put into production today, Mythos 5 represents the "bleeding edge" of AI agency. If you need a model that follows every instruction to the letter, go with Opus. If you're looking for a model that can figure out the "how" on its own, keep an eye on the Mythos rollout.

Why This Matters

Anthropic is the first major AI lab to clearly distinguish between Intelligence (the ability to process information) and Agency (the ability to act independently).

For the average user, Opus 4.7 also brings a 1-million-token context window and the most reliable "common sense" in the industry. For developers building the next generation of AI agents, Mythos 5 is the north star.

Are you ready to delegate? Opus 4.7 is live now on the Claude App and Amazon Bedrock.

How are you planning to use the new 2K vision capabilities—debugging code or analyzing data?