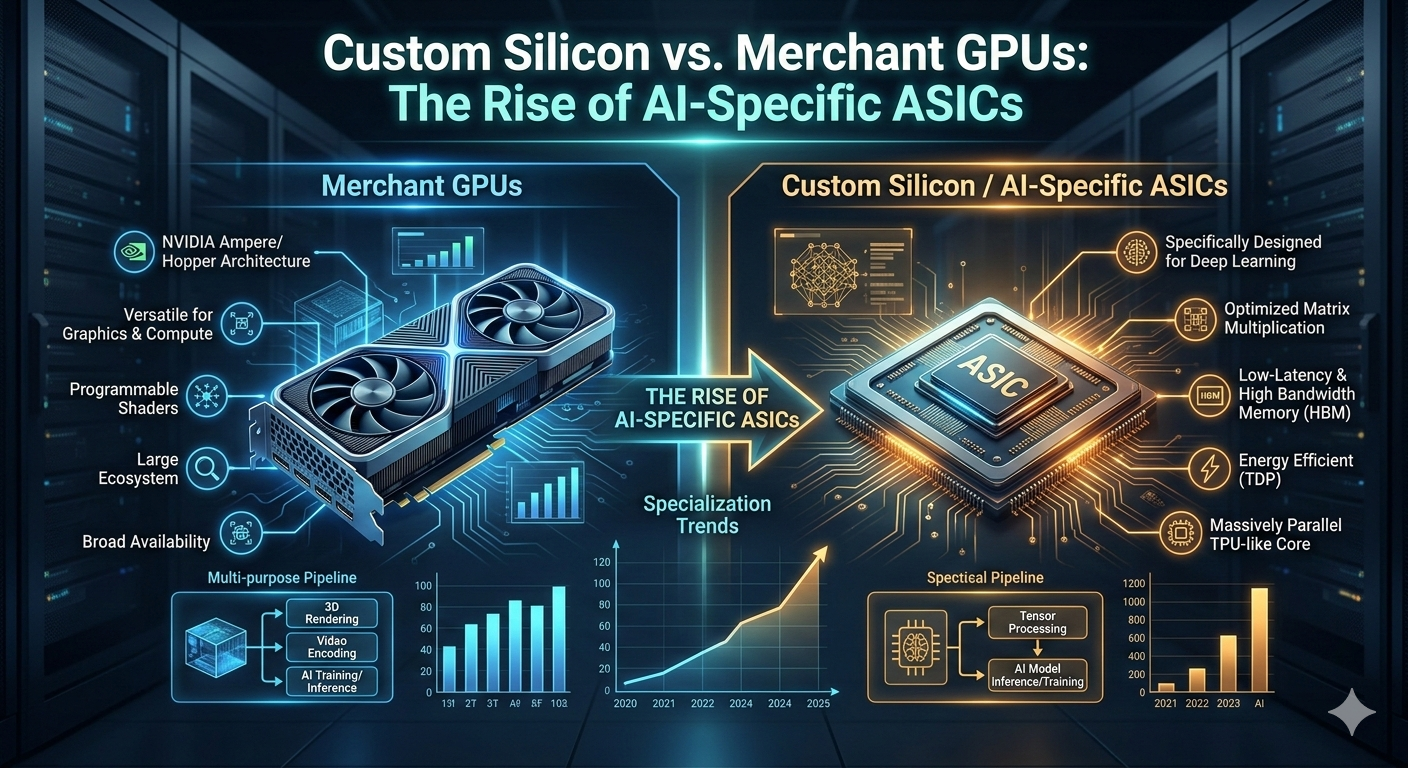

Custom Silicon vs. Merchant GPUs: The Rise of AI-Specific ASICs

Forget the "same old" story of generic hardware: the silicon landscape is being torn down and rebuilt from the substrate up. For roughly the past decade, the tech world has bowed to the GPU as the undisputed king of AI computing. But as AI models grow more massive and power-hungry, a new rebellion is brewing in the server racks: the rise of the AI-Specific ASIC (Application-Specific Integrated Circuit).

We are moving away from the "one size fits all" era of merchant silicon and into a world where the biggest players on the planet are designing their own chips to win the AI arms race.

The Contenders: Merchant GPUs vs. Custom ASICs

To understand why this shift is happening, we have to look at the fundamental difference between a general-purpose tool and a specialized weapon.

Merchant GPUs (e.g., NVIDIA H100)

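Versatility: High. GPUs are general-purpose parallel processors. The same architectural lineage spans gaming, 3D rendering, scientific computing, and AI, even though data center parts like the H100 are tuned for compute rather than graphics.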

Efficiency: Moderate. Because they are jacks-of-all-trades, they carry "legacy weight": extra circuitry, such as graphics-oriented units and high-precision (FP64) math hardware, that many AI workloads never use.

Availability: Subject to severe market shortages and the steep price premium critics call the "NVIDIA tax."

Custom AI ASICs (e.g., Google TPU, AWS Trainium)

Versatility: Low. These are designed around the dense linear algebra (above all, matrix multiplication) that neural networks run on, and they are far less flexible outside that domain.

Efficiency: Extreme. Nearly all of the die is devoted to AI throughput, so they do one class of work exceptionally well.

Cost & Power: High upfront R&D cost, but potentially massive long-term savings on electricity and hardware once deployed at scale.
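The R&D-versus-savings trade-off is an amortization problem: a custom chip only pays off once enough units are deployed for the per-chip savings to cover the fixed design cost. Below is a minimal sketch that assumes a deliberately simple model (purchase price plus lifetime electricity per chip). The function names and every dollar figure are hypothetical placeholders, not real costs for any product.

```python
# Back-of-the-envelope break-even model for custom silicon.
# All numbers are hypothetical placeholders, not real chip costs.

def savings_per_chip(merchant_price: float, custom_unit_cost: float,
                     merchant_energy_cost: float, custom_energy_cost: float) -> float:
    """Lifetime savings of one custom chip vs. one merchant GPU (purchase + electricity)."""
    return (merchant_price + merchant_energy_cost) - (custom_unit_cost + custom_energy_cost)


def break_even_chips(upfront_cost: float, per_chip_savings: float) -> float:
    """Chips that must be deployed before per-chip savings repay the upfront cost."""
    if per_chip_savings <= 0:
        raise ValueError("a custom chip must save money per unit to ever break even")
    return upfront_cost / per_chip_savings


UPFRONT_RD = 500_000_000  # hypothetical: design, verification, masks, software stack

per_chip = savings_per_chip(
    merchant_price=30_000,       # hypothetical GPU price
    custom_unit_cost=12_000,     # hypothetical ASIC manufacturing cost
    merchant_energy_cost=6_000,  # hypothetical lifetime electricity, GPU
    custom_energy_cost=4_000,    # hypothetical lifetime electricity, ASIC
)
chips_needed = break_even_chips(UPFRONT_RD, per_chip)

print(f"savings per chip: ${per_chip:,.0f}")
print(f"chips to break even: {chips_needed:,.0f}")
```

With these made-up inputs the design breaks even at 25,000 chips. A hyperscaler deploying accelerators by the hundreds of thousands clears such a threshold easily, while a smaller buyer may never reach it, which is one reason custom silicon remains a big-player game.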

Why Custom Silicon is Taking Over

1. The Death of "Overhead"

A standard GPU is like a Swiss Army knife: it’s great because it has a blade, a screwdriver, and scissors. But if your only job is to cut bread all day, you don’t need the extra weight. Custom ASICs strip away the unneeded parts and focus on matrix multiplication, the mathematical heartbeat of AI. For those workloads, this can make them faster and cooler-running, spending less power and generating less heat per operation.
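To make "matrix multiplication is the heartbeat of AI" concrete, here is a minimal pure-Python sketch of a dense (fully connected) neural-network layer. The helper names (`matmul`, `dense_layer`, `mac_count`) and the example sizes are hypothetical; real frameworks hand this work to tuned kernels on a GPU or ASIC. The point is that nearly all of the work is repeated multiply-accumulate (MAC) operations, which is exactly what an AI ASIC packs its silicon with.

```python
# A dense layer is essentially y = x @ W + b. Plain lists keep this dependency-free.

def matmul(a, b):
    """Multiply an (m x k) matrix by a (k x n) matrix with nested loops."""
    m, k = len(a), len(a[0])
    k2, n = len(b), len(b[0])
    if k != k2:
        raise ValueError(f"inner dimensions differ: {k} vs {k2}")
    out = [[0.0] * n for _ in range(m)]
    for i in range(m):
        for j in range(n):
            acc = 0.0
            for p in range(k):
                acc += a[i][p] * b[p][j]  # one multiply-accumulate (MAC)
            out[i][j] = acc
    return out


def dense_layer(x, w, bias):
    """Forward pass of a fully connected layer for a batch x (batch x in_features)."""
    y = matmul(x, w)
    return [[v + bj for v, bj in zip(row, bias)] for row in y]


def mac_count(batch, in_features, out_features):
    """MACs needed by one dense layer: batch * in_features * out_features."""
    return batch * in_features * out_features


x = [[1.0, 2.0]]             # batch of 1, 2 input features
w = [[0.5, -1.0, 2.0],
     [1.5, 0.0, 1.0]]        # 2 inputs -> 3 outputs
b = [0.1, 0.2, 0.3]
print(dense_layer(x, w, b))

# A single mid-sized layer already needs over half a billion MACs per batch.
print(mac_count(batch=32, in_features=4096, out_features=4096))
```

The triple loop is the workload in miniature: an ASIC replaces it with large arrays of hardware MAC units fed directly from on-chip memory.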

2. The Power Wall

Data centers are hitting physical limits on electricity draw, and merchant GPUs are notorious power hogs. By designing custom silicon like Amazon’s Inferentia, companies aim to squeeze significantly more performance out of every watt; vendor figures vary, and the real gain depends heavily on the workload and the baseline being compared. In the world of "Green AI," efficiency is the ultimate competitive advantage.
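The performance-per-watt argument is plain arithmetic: one watt is one joule per second, so dividing throughput by power gives work done per joule. The sketch below uses made-up figures for a "merchant GPU" and a "custom ASIC"; the `Accelerator` class, its fields, and every number are hypothetical and do not describe any real chip.

```python
from dataclasses import dataclass


@dataclass
class Accelerator:
    """Hypothetical accelerator profile; the values passed in below are placeholders."""
    name: str
    tokens_per_second: float  # sustained inference throughput
    watts: float              # average power draw under that load

    def tokens_per_joule(self) -> float:
        # 1 watt = 1 joule per second, so throughput / power = work per joule.
        return self.tokens_per_second / self.watts


def energy_kwh_for(acc: Accelerator, tokens: float) -> float:
    """Energy needed to generate `tokens` on `acc`, in kilowatt-hours."""
    joules = tokens / acc.tokens_per_joule()
    return joules / 3.6e6  # 1 kWh = 3.6 million joules


# Placeholder figures chosen only to illustrate the arithmetic.
gpu = Accelerator("merchant GPU (hypothetical)", tokens_per_second=3000, watts=700)
asic = Accelerator("custom ASIC (hypothetical)", tokens_per_second=2400, watts=250)

ratio = asic.tokens_per_joule() / gpu.tokens_per_joule()
print(f"{gpu.name}: {gpu.tokens_per_joule():.2f} tokens/J")
print(f"{asic.name}: {asic.tokens_per_joule():.2f} tokens/J")
print(f"perf-per-watt advantage: {ratio:.2f}x")

workload = 1e12  # one trillion tokens, an arbitrary illustrative workload
for acc in (gpu, asic):
    print(f"{acc.name}: {energy_kwh_for(acc, workload):,.0f} kWh")
```

In this made-up scenario the ASIC is slower in raw throughput yet roughly 2.2x more efficient per joule, so it serves the same workload on less than half the energy. That trade-off is what the "power wall" argument rests on.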

3. Software-Hardware Co-Design

When a company builds both the chip and the software stack (as Google does with TPUs and the XLA compiler underneath TensorFlow and JAX), the two can be tuned in tandem. This "vertical integration" enables compiler and hardware optimizations tailored to the exact chip that are harder to achieve on generic hardware, which can cut training times substantially.
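One concrete example of the kind of trick co-design enables is operator fusion, which compilers such as XLA apply: several element-wise steps are merged into a single pass so intermediate results never round-trip through memory. The pure-Python sketch below is only an analogy. The function names are hypothetical, Python lists stand in for accelerator memory, and merchant GPUs benefit from fusion too; it illustrates the idea, not an ASIC-only capability.

```python
# Conceptual sketch of operator fusion. Lists stand in for device memory buffers.

def unfused(x, scale, bias):
    """Three separate 'kernels': each pass materializes a full intermediate buffer."""
    scaled = [v * scale for v in x]        # pass 1: read x, write buffer
    shifted = [v + bias for v in scaled]   # pass 2: read buffer, write buffer
    return [max(v, 0.0) for v in shifted]  # pass 3: ReLU, read buffer, write output


def fused(x, scale, bias):
    """One 'kernel': each element is read once, transformed, and written once."""
    return [max(v * scale + bias, 0.0) for v in x]


def memory_traffic(n, passes):
    """Element reads plus writes, assuming each pass reads its input and writes its output."""
    return passes * 2 * n


x = [-2.0, -0.5, 0.0, 1.5, 3.0]
assert unfused(x, 2.0, 1.0) == fused(x, 2.0, 1.0)  # same math, same result
print(memory_traffic(len(x), passes=3), "vs", memory_traffic(len(x), passes=1))
```

Both versions compute identical values; the fused one simply touches memory a third as often in this model. Because memory bandwidth, not arithmetic, often limits AI chips, a compiler that knows the target hardware intimately can decide exactly which operations to fuse.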

The Major Players and Their Secret Silicon

The shift isn't just happening in labs; it's powering the apps you use every day:

Google (TPU): The pioneer. Their Tensor Processing Units have been the backbone of Google Search and Translate for years.

AWS (Trainium & Inferentia): Amazon builds Trainium for training and Inferentia for inference, and is aggressively courting its cloud customers to run on these chips to lower costs and sidestep global GPU shortages.

Meta (MTIA): To power the recommendation engines of Instagram and Facebook, Meta developed its own accelerator to handle its distinctive "ranking" workloads.

Microsoft (Maia): A more recent titan to join the fray, with custom silicon designed specifically to run the massive Large Language Models (LLMs) behind Azure AI.

The Bottom Line

The "Rise of the ASICs" signifies a fragmenting of the hardware market. While NVIDIA remains a titan thanks to its software ecosystem (chiefly CUDA), the world’s largest tech companies are no longer willing to wait in line for generic chips.

By building their own "brain tissue," they are gaining far more control over their AI destiny. We are entering an era where the most powerful AI won't just be the one with the best code, but also the one with the most specialized silicon.