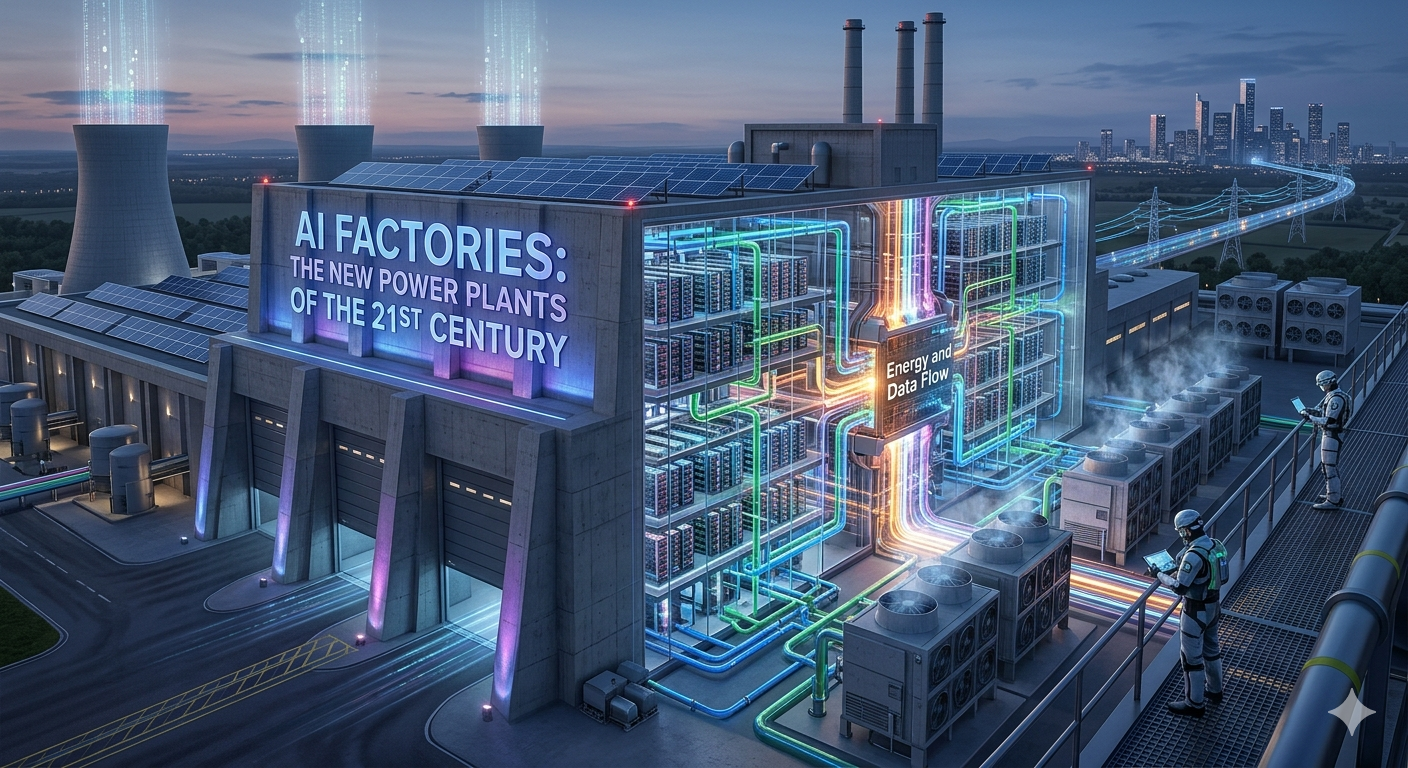

AI Factories: The New Power Plants of the 21st Century

Introduction: From Storage to Production

For decades, data centers were the "digital filing cabinets" of the world. They were built for stability and designed to host websites and store trillions of emails. But in 2026, the demand for generative and agentic AI has outstripped the capabilities of traditional infrastructure.

Enter the AI Factory. As NVIDIA CEO Jensen Huang famously noted, we have moved from an era of "retrieving" information to an era of "producing" it. The AI Factory doesn't just hold data; it treats data as a raw material and uses massive computational power to refine it into a finished product: intelligence.

1. What Makes a "Factory" Different from a "Data Center"?

The shift isn't just semantic; it’s architectural. Traditional data centers rely on CPUs for sequential tasks. AI Factories are built around Accelerated Computing (GPUs and TPUs) designed for massive parallelism.

| Feature | Traditional Data Center | AI Factory |
| --- | --- | --- |
| Primary Goal | Storing & moving data | Manufacturing intelligence (tokens) |
| Core Hardware | CPUs (Central Processing Units) | GPUs/TPUs (Accelerated Computing) |
| Cooling | Standard Air Cooling | Liquid Cooling (Direct-to-Chip) |
| Connectivity | North-South (User to Server) | East-West (Server to Server/Parallel) |
| Power Density | 10–20 kW per rack | 100 kW+ per rack |
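To make the power-density row concrete, here is a minimal Python sketch of the arithmetic. The 10 MW IT load and the 15 kW midpoint for a traditional rack are illustrative assumptions, not figures from any specific facility, and `racks_needed` is a hypothetical helper written for this example.

```python
import math


def racks_needed(it_load_kw: float, kw_per_rack: float) -> int:
    """Return how many racks are needed to host an IT load at a given rack density."""
    if it_load_kw < 0 or kw_per_rack <= 0:
        raise ValueError("load must be non-negative and rack density must be positive")
    # Round up: a partially filled rack still occupies floor space.
    return math.ceil(it_load_kw / kw_per_rack)


# Assumption: a hypothetical 10 MW (10,000 kW) IT load.
IT_LOAD_KW = 10_000

# Assumption: 15 kW is the midpoint of the 10-20 kW range in the table.
traditional_racks = racks_needed(IT_LOAD_KW, 15)
# 100 kW is the lower bound given for an AI Factory rack.
ai_factory_racks = racks_needed(IT_LOAD_KW, 100)

print(f"Traditional racks: {traditional_racks}")
print(f"AI Factory racks:  {ai_factory_racks}")
```

Under these assumptions, the same load fits in roughly one-seventh as many racks. That concentration of heat in a small footprint is one reason air cooling gives way to direct-to-chip liquid cooling.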

2. The Five-Layer Stack of the AI Economy

In 2026, we view AI infrastructure as a "five-layer cake." To understand the AI economy, you must understand how these layers interact:

Energy: The first principle. Intelligence is the result of electrons moving. Access to sustainable energy (nuclear, hydrogen, and solar) is now the primary constraint on AI growth.

Chips: The specialized processors (like the Blackwell Ultra or custom hyperscaler silicon) that turn energy into math.

Infrastructure: The AI Factory itself: the physical building, the liquid cooling, and the high-speed networking (InfiniBand/Spectrum-X) that lets 100,000 GPUs operate as a single computer.

Models: The "engines" (LLMs, LMMs, and Physical AI) that process information.

Applications: The interface where the intelligence is finally consumed by the end-user.

3. Why AI Factories are the New "Sovereign" Infrastructure

Nations are now treating AI Factories the way they treat power grids or water systems. We are seeing the rise of Sovereign AI, where countries such as Singapore, France, and Japan are building their own AI Factories to ensure their data, culture, and intelligence production remain within their borders.

Economic Impact: Countries with the highest "intelligence throughput" will lead in drug discovery, autonomous manufacturing, and financial services.

The Data Flywheel: Unlike traditional factories, AI Factories get more efficient over time. As they produce intelligence, that intelligence helps refine the next generation of models, creating a self-sustaining loop of innovation.

4. The 2026 Outlook: Inference at the Edge

While 2024 and 2025 were the years of training (building the models), 2026 is the year of inference (running them).

This is shifting the geography of AI Factories. While massive "Gigafactories" handle the heavy lifting of training, smaller "Edge AI Factories" are popping up in Tier 2 cities and industrial hubs. This reduces latency, allowing for real-time AI reasoning in autonomous vehicles and robotic surgical suites.
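The latency argument can be sketched with a rough propagation-delay estimate. The example below assumes light travels through optical fiber at about 200 km per millisecond (roughly two-thirds of its speed in a vacuum). It ignores routing, queuing, and the inference time itself, so real round trips will be longer. The two distances are hypothetical.

```python
# Assumption: signal speed in optical fiber is roughly 200 km per millisecond.
FIBER_KM_PER_MS = 200.0


def round_trip_ms(distance_km: float) -> float:
    """Estimate the round-trip propagation delay over fiber, in milliseconds."""
    if distance_km < 0:
        raise ValueError("distance must be non-negative")
    # The request travels to the site and the response travels back.
    return 2 * distance_km / FIBER_KM_PER_MS


# Hypothetical distances: a remote gigafactory vs. a nearby edge site.
remote_km = 1500
edge_km = 50

print(f"Remote site ({remote_km} km): {round_trip_ms(remote_km):.1f} ms round trip")
print(f"Edge site ({edge_km} km): {round_trip_ms(edge_km):.1f} ms round trip")
```

Even in this best case, the remote site spends 15 ms on propagation alone, compared with 0.5 ms for the edge site. That gap matters for control loops in vehicles or surgical robots that must react within a few milliseconds.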

Investing in the "Intelligence Engine"

The transition to AI Factories represents the largest infrastructure investment supercycle in modern history. We are no longer just "going to the cloud"; we are building the engines that will power the next century of human progress.

"AI runs on real hardware, real energy, and real economics. It takes raw materials and converts them into intelligence at scale." — Jensen Huang, 2026